AI adoption is accelerating. Good AI and AI strategies? Much less so.

McKinsey’s 2025 Global Survey found that 88% of organisations now use artificial intelligence in at least one business function. At the same time, only about 7% have fully scaled it. MIT’s research went further: roughly 95% of generative AI pilots deliver zero measurable impact on P&L. That is thirty to forty billion dollars of enterprise investment, with the vast majority of it stuck in pilot purgatory.

So the technology works and adoption is real, but somewhere between “let’s try AI” and “AI is generating business value” something keeps breaking. Repeatedly. At scale. With impressive budgets attached.

That something is AI strategy.

Enterprise AI strategies determine whether AI becomes a capability your organisation can build on or an expensive experiment that gets shelved next quarter when someone asks “wait, what did we get from this?”.

Good AI, the kind that aligns with business goals, scales and creates measurable value, is an outcome of disciplined execution. And discipline, as it turns out, is harder to buy than a language model subscription.

Here are 8 enterprise AI strategies that separate the 5% from everyone else.

1. Business-First AI Strategy

Before anyone picks an AI model or evaluates AI tools, there’s a question that deserves an answer: what core business problems are we solving?

Good AI strategies start with clarity on priorities, growth objectives, margin pressures, risk exposure and customer outcomes. If an AI initiative can’t trace a direct line back to your business objectives and corporate strategy, it probably shouldn’t be funded.

That sounds ruthless, but consider the alternative: MIT’s research found that over half of enterprise AI budgets go to sales and marketing tools (the most visible category) while back-office automation consistently delivers higher ROI.

Investment follows hype when business strategy isn’t leading the conversation. And hype, for the record, has never once appeared on a balance sheet as an asset. Align AI strategies with what the business needs and only then choose the technology. The sequence feels obvious but the number of organisations that do it backwards suggests otherwise.

2. Use-Case Prioritisation Strategy

There are always more AI use cases than time, budget or patience to implement them. Always. The 2025 AI Governance Benchmark Report found that 80% of enterprises have fifty or more generative AI use cases in their pipeline. Most have only a handful in production.

The gap between “ideas” and “shipped” is where enterprise AI goes to age.

Leading organisations sequence their AI initiatives deliberately. They identify high-impact domains, pick the ones with the best ratio of effort to business value, prove those work and build momentum from there. Scattered experimentation is how you end up with twelve AI projects, three conflicting analytics dashboards and a proof-of-concept that nobody remembers commissioning. Every one of those projects has a Slack channel, a project lead and a monthly update meeting. None of them have shipped.

Good AI is curated. Pick a boring expensive problem, automate it, measure what changed and then expand.
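As a rough illustration of that sequencing, here is a minimal Python sketch that ranks a backlog by value per unit of effort and fills a fixed delivery capacity. Everything in it is an assumption made for the example: the `UseCase` fields, the scoring ratio, the capacity figure and the sample numbers are hypothetical, and a real scoring model would also weigh risk, data readiness and dependencies.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_value: float  # estimated value in any consistent unit (hypothetical)
    effort: float          # estimated delivery effort, e.g. person-months; must be > 0

    @property
    def value_per_effort(self) -> float:
        return self.business_value / self.effort


def prioritise(use_cases: list[UseCase], capacity: float) -> list[UseCase]:
    """Greedily pick the best value-per-effort use cases that fit the capacity."""
    selected = []
    remaining = capacity
    for uc in sorted(use_cases, key=lambda u: u.value_per_effort, reverse=True):
        if uc.effort <= remaining:
            selected.append(uc)
            remaining -= uc.effort
    return selected


# Illustrative backlog; the numbers are made up for the example.
backlog = [
    UseCase("invoice matching", business_value=400, effort=4),
    UseCase("sales email drafting", business_value=150, effort=3),
    UseCase("support ticket triage", business_value=240, effort=6),
    UseCase("contract clause search", business_value=200, effort=8),
]
shortlist = prioritise(backlog, capacity=10)
print([uc.name for uc in shortlist])
```

The point of the sketch is less the arithmetic than the habit: every use case gets an explicit value and effort estimate, and anything that doesn’t fit the capacity waits its turn.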

3. AI Operating Model Strategy

Structure determines whether AI scales or stalls, and this is where many enterprises hit a wall that has nothing to do with technology. Good AI strategies involve getting the operating model right before scaling anything.

An AI operating model needs to define decision rights, accountability, funding authority and cross-functional coordination:

- Who approves new AI projects?

- Who owns outcomes after deployment?

- Who decides when to kill something that isn’t working?

Without clear answers, AI initiatives drift into the organisational equivalent of a shared Google Doc where everyone has edit access and nobody owns the final version. And the longer ownership stays unclear, the worse it gets. Projects duplicate because nobody knew someone else was already doing the same thing. The waste isn’t dramatic but administrative, and that’s why it survives so long without anyone noticing.

The typical options are centralised (one team controls everything), federated (business units run their own AI) or hybrid. Each has tradeoffs.

- Centralised models offer consistency but can bottleneck every decision through a single team that becomes the busiest people in the building;

- Federated models move faster but risk duplication: three teams building three chatbots for three slightly different versions of the same problem;

- Hybrid models try to balance both and (like most compromises) work best when the governance framework around them is solid.

Pick one, define the roles, revisit as AI maturity grows. The worst choice here is no choice, because ambiguity doesn’t pause while you figure it out; it just creates more pilots.

4. Governance and Responsible AI Strategy

Governance gets a bad reputation. People hear the word and picture slow approvals, thick policy documents and “compliance” repeated until it loses all meaning, but in the AI context governance is what keeps things from going wrong in expensive and public ways.

The PEX Report 2025/26 found that only 43% of organisations have a formal AI governance policy in place, while 29% have none at all. Meanwhile, Deloitte’s 2026 State of AI report found that only one in five companies has a mature governance model for autonomous AI agents, even as agentic AI adoption accelerates quickly. That’s like building faster cars while removing the brakes. Technically impressive, strategically questionable.

Responsible AI means embedding data controls, ethical AI, compliance oversight and model monitoring into how AI systems operate from day one.

- Address ethical risks before they become headlines.

- Establish data protection standards before a regulator asks about them.

- Monitor AI outputs continuously, because a model that worked well in January might hallucinate confidently by March.

Governance done well is the reason you can deploy without worrying that something is going sideways. Governance done badly (or not done at all) is the reason your competitor is in the news for the wrong reasons.
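Continuous monitoring can start very small. The sketch below assumes you already collect some per-response quality score between 0 and 1 (from human review or automated evaluation) and flags when the recent average drops too far below a baseline. The function name, the 0.05 threshold and the sample scores are hypothetical; production monitoring would typically add statistical tests, segmentation and alert routing.

```python
from statistics import mean


def quality_alert(baseline_scores, recent_scores, max_drop=0.05):
    """Return True when the recent average falls more than max_drop below the baseline average."""
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > max_drop


# Illustrative scores only: a model that looked fine in January and worse by March.
january = [0.92, 0.90, 0.91, 0.93]
march = [0.84, 0.80, 0.83, 0.81]

if quality_alert(january, march):
    print("Quality dropped beyond the agreed threshold; trigger a review.")
```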

5. Data and Technology Foundation Strategy

AI cannot outperform its foundation. You can deploy the most sophisticated machine learning models available, and if they’re trained on messy, incomplete or contradictory data, the AI outputs will reflect exactly that. Garbage in, confident garbage out!

Data quality, interoperability, infrastructure scalability and platform standardisation are the groundwork that separates robust AI from expensive disappointment. And you can’t redesign business processes on top of fragmented, siloed data. If data lives in four systems and disagrees with itself in three of them, your AI will confidently act on whichever version it sees first. It won’t ask for clarification; it will just be wrong, fluently.

This also means managing data governance alongside AI governance.

- Know where your sensitive data lives;

- Know who can access it;

- Know which data your AI systems are training on.

When you skip this step, you tend to discover the problem later, usually in front of a regulator or on the front page of something you’d rather not be on.

Data preparation typically consumes 40-60% of AI project budgets and up to 70% of data science teams’ time. That ratio feels backwards until you’ve watched a perfectly capable AI system produce nonsense because nobody cleaned the inputs.

6. Talent and Capability Development Strategy

Insufficient worker skills are among the biggest barriers to integrating AI into existing workflows. The technology is ready, but the people strategy, in many cases, is still catching up.

AI maturity is organisational maturity. It means:

- AI literacy across leadership (not just the data science team) so that executives can evaluate AI initiatives without defaulting to “sounds impressive, approved”;

- Technical depth in core teams so engineers and analysts can work alongside AI systems rather than route around them;

- Continuous upskilling, because AI tools evolve so fast that last year’s training is already a historical document.

McKinsey’s data shows that high-performing organisations are three times more likely to report strong senior leadership engagement with AI. That engagement goes beyond signing off on budgets. It means leaders who role-model the use of AI, set the vision and make governance decisions rather than delegating them to a committee that meets every other Thursday.

Successful AI strategies tend to be driven by empowered line managers, not centralised AI labs. The people closest to the problems usually know which problems are worth solving. Give them the skills and the permission to use AI, and adoption stops being a top-down mandate and starts being something that sticks.

7. Portfolio and Investment Strategy

AI investments should be managed the way any serious portfolio is: with balance, discipline and regular rebalancing.

That means:

- Balancing efficiency gains with growth initiatives;

- Weighing short-term ROI against long-term positioning;

- Funding both incremental improvements and entirely new AI capabilities;

- Having the courage to defund things that aren’t working, which is harder than it sounds when someone’s reputation is attached to a pilot that’s been running for nine months with no clear key performance indicators.

8. Dynamic Iteration and Change Strategy

An enterprise AI strategy written in 2025 and left untouched until 2027 will be irrelevant. Markets evolve, technology follows. Your strategy has to keep pace or it becomes a very well-formatted artefact from a different era.

- Establish review cycles;

- Build performance tracking into every initiative from the start;

- Create feedback loops where the people using AI tools can report what works, what doesn’t and what’s changed since deployment;

- Build capital reallocation mechanisms so budgets follow performance rather than staying locked to last quarter’s plan.
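To make the last point concrete, here is one possible reallocation rule, sketched in Python under stated assumptions: each initiative reports KPI attainment as a fraction of its target, initiatives below target give up a fixed share of their budget, and the freed amount is split evenly among those at or above target. The function, the 50% cut and the figures are all hypothetical; real rules would be negotiated with finance and would likely be less blunt.

```python
def reallocate(budgets, kpi_attainment, cut=0.5, threshold=1.0):
    """Shift a share of budget from initiatives below their KPI target to those meeting it.

    budgets: initiative name -> current budget
    kpi_attainment: initiative name -> achieved KPI as a fraction of target
    """
    under = [name for name in budgets if kpi_attainment[name] < threshold]
    over = [name for name in budgets if kpi_attainment[name] >= threshold]
    if not under or not over:
        # Nothing to move, or nowhere to move it: leave budgets unchanged.
        return dict(budgets)

    freed = sum(budgets[name] * cut for name in under)
    new_budgets = {name: budgets[name] * (1 - cut) for name in under}
    for name in over:
        new_budgets[name] = budgets[name] + freed / len(over)
    return new_budgets


# Illustrative figures only.
budgets = {"invoice matching": 100, "chatbot pilot": 200, "demand forecast": 100}
attainment = {"invoice matching": 1.2, "chatbot pilot": 0.4, "demand forecast": 1.0}
new_budgets = reallocate(budgets, attainment)
print(new_budgets)
```

The total budget is preserved; only its distribution changes, which is the behaviour a review cycle needs if budgets are meant to follow performance.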

If you still review your AI strategy annually, you are reviewing a document that was outdated by the time the meeting started.

The best enterprise AI strategies treat iteration as a feature. Getting it right the first time is a fantasy, but getting better continuously is how AI success happens.

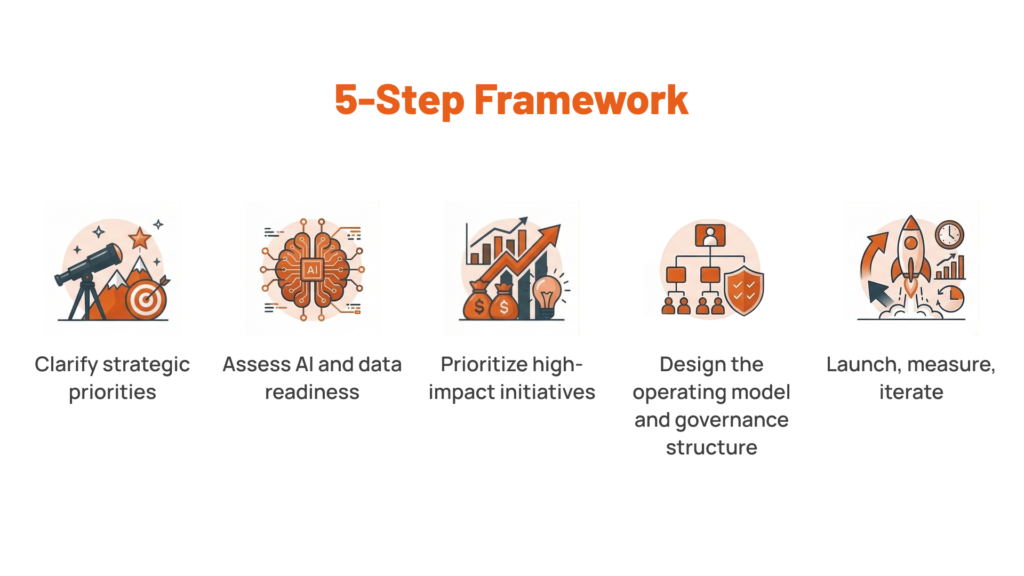

A Practical Framework for Creating Good AI

If the eight strategies above feel like a lot (and they are), here’s the condensed version. Five steps to create an effective AI strategy:

This won’t win awards for novelty. But if you follow it step by step without skipping the boring parts, you will end up on the right side of that 95/5 divide.

What Comes After Strategy

Enterprise AI strategies fail because there are too many pilots without ownership, too much investment without alignment, too much technology without architecture, too much enthusiasm without a governance framework. The patterns are well-documented. That 95% failure rate is alarming, but it’s also useful because it tells you exactly what to avoid.

Good AI is the outcome of everything that comes before the model: the business alignment, the operating model, the data foundation, the talent, the governance, the portfolio discipline, the willingness to iterate. Get those right and the AI part becomes the relatively straightforward part.

At Lerpal, we approach AI strategy the way we approach everything: architecture first, execution always. We help organisations build the conditions for AI to work – the operating models, the data infrastructure, the governance structures, the delivery capability – so that when AI enters the picture it has somewhere solid to land.

Good AI comes from better foundations. And foundations, as it happens, are what we build.

Explore how Lerpal helps organisations create the conditions for AI that works.